The Science Behind Modeling Materials at the Atomic Scale

by Carey Sargent, EPFL, NCCR MARVEL

Nicola Marzari, director of NCCR MARVEL, should know. Together with the more than 40 principal investigators from 12 Swiss institutions involved in the NCCR, he uses molecular modelling techniques based on the principles of quantum mechanics established in the last century to transform and accelerate the design and discovery of new materials, serving fields as diverse as energy production and watchmaking.

“We can use these models to predict electrical performance in a transistor or the hardness of a piece of diamond, for example,” he said. “We can explore possibilities that have never been tried in a laboratory, and this at a speed that has nothing to do with the human speed of a researcher needing to synthesize a molecule and test it, but rather with the brisk speed at which computers improve.”

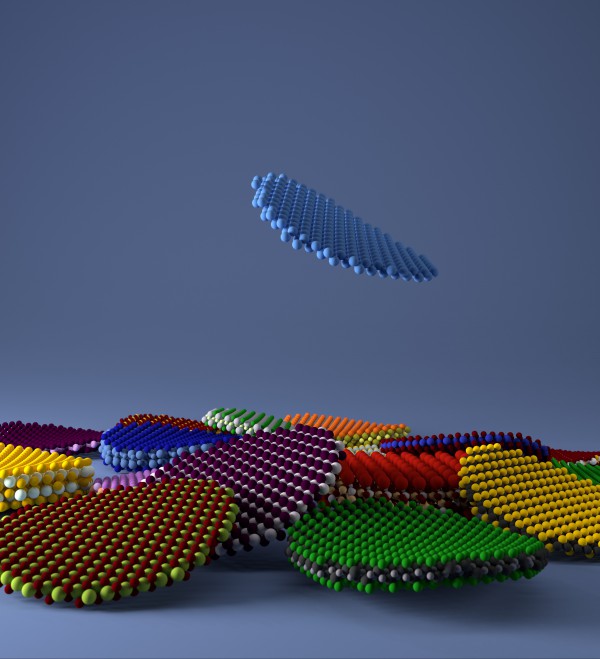

Computational discovery of novel 2D materials © Giovanni Pizzi, EPFL

It all started in the 1920s, when scientists such as Erwin Schrödinger and Werner Heisenberg (both attendees of the famous Solvay Conferences), and later Paul Dirac, developed and refined simple equations to describe the wavelike behavior of small particles such as electrons. The Schrödinger equation, for example, describes the form of the probability waves governing the motion of such particles and specifies how they are altered by external influences. Because chemical bonding is nothing other than the constructive interference of such waves, these equations can reveal how wavelike objects come together to form molecules, solids or other matter, as well as many of the resulting properties. There’s only one catch: these simple equations are very difficult to solve.

Here’s why: describing a wave in the sea would simply require knowing its height at every point; describing electron waves entails knowing the equivalent of this height, usually called the amplitude, for every possible combination of electron positions in the system. In a simple example, where a single electron could be in, say, 1,000 different positions inside a small box, we need to know its amplitude at every point, that is, 1,000 numbers. With two electrons, though, we need to know the 1,000 amplitudes of one electron for every one of the 1,000 positions of the other: 1,000 × 1,000, or one million numbers.

“When you start to look at something like 26 electrons, which is the number of electrons in an atom of iron, this requires us to know 10⁷⁸ amplitudes, a number so large that it is actually comparable to the number of atoms in the entire universe. For fans of big numbers, this is referred to as a quadrillion vigintillion, by the way,” Marzari said. “The questions of quantum mechanics are simple, but the informational content explodes very quickly.”
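The counting argument above can be checked with a few lines of arithmetic. This is a minimal sketch that follows the article's toy box of 1,000 possible positions per electron; the function name `amplitude_count` is hypothetical, and the sketch deliberately ignores spin and the symmetry of identical electrons, which shrink the count somewhat but do not stop it from growing exponentially with the number of electrons.

```python
def amplitude_count(n_electrons: int, n_positions: int = 1000) -> int:
    # One amplitude for every combination of electron positions:
    # n_positions choices for electron 1, times n_positions for electron 2, and so on.
    # Toy model only: spin and electron indistinguishability are ignored.
    return n_positions ** n_electrons


for n in (1, 2, 26):
    count = amplitude_count(n)
    exponent = len(str(count)) - 1  # count is an exact power of ten in this toy setup
    print(f"{n:>2} electron(s): 10^{exponent} amplitudes")
```

Python's integers have arbitrary precision, so the 26-electron case is computed exactly rather than overflowing.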

One big breakthrough in solving these equations certainly came when machines replaced the people employed to carry out the complex calculations. It was, however, an exceptional human (Walter Kohn, who later won the Nobel Prize in Chemistry) who provided the insight needed to make quantum mechanics far easier to compute. The theory he developed, together with Pierre Hohenberg and Lu Jeu Sham, is called density-functional theory (DFT), because it shows that the density of the electrons, rather than their amplitudes, is sufficient to solve the equations of quantum mechanics.

Now, the density of electrons can be represented by a single number at each point in space, and it doesn’t matter whether you have 1, 26, or a quadrillion electrons: the informational content doesn’t change. This allowed scientists to circumvent the data explosion that comes from tracking every combination of electron positions. Interestingly, the DFT equations were proven to exist, but no one knows them exactly. They contain an unknown term, the exchange-correlation functional, and that’s where the fun begins: approximations to this unknown have gotten better and better over the years, and are now accurate enough to allow researchers (such as those at MARVEL, short for Materials' Revolution) to predict how atoms come together in molecules, solids or any piece of inorganic matter, and what the resulting properties are. They can predict the power of the battery in your phone, the color of a shiny metal in your watch, or the strength of a new alloy in your car. Though this modelling doesn’t mean they can skip experiments altogether, it immensely speeds up the discovery process.
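To see why the density keeps the bookkeeping fixed, here is a minimal sketch on the same 1,000-point grid. It assumes a deliberately simple toy model, not a real DFT code: non-interacting, spinless electrons filling the lowest orbitals of a one-dimensional particle-in-a-box, one electron per orbital. The function name `box_density` and its parameters are hypothetical. The real interactions, and the unknown exchange-correlation term DFT must approximate, are left out entirely; the only point illustrated is that the density is one number per grid point whether there are 1 or 26 electrons.

```python
import math


def box_density(n_electrons: int, n_points: int = 1000, length: float = 1.0) -> list[float]:
    """Toy electron density n(x) for non-interacting, spinless electrons that
    fill the lowest particle-in-a-box orbitals, one electron per orbital."""
    dx = length / (n_points + 1)
    density = [0.0] * n_points
    for level in range(1, n_electrons + 1):
        for j in range(n_points):
            x = (j + 1) * dx  # interior grid points; the walls sit at 0 and length
            psi = math.sqrt(2.0 / length) * math.sin(level * math.pi * x / length)
            density[j] += psi * psi  # each occupied orbital adds |psi(x)|^2
    return density


dx = 1.0 / (1000 + 1)
for n in (1, 26):
    rho = box_density(n)
    # The density is always 1,000 numbers; integrating it over the box recovers n.
    print(n, len(rho), round(sum(rho) * dx, 6))
```

Contrast this with the amplitude count for the same grid: adding electrons here only changes the values stored at each point, not how many values there are.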

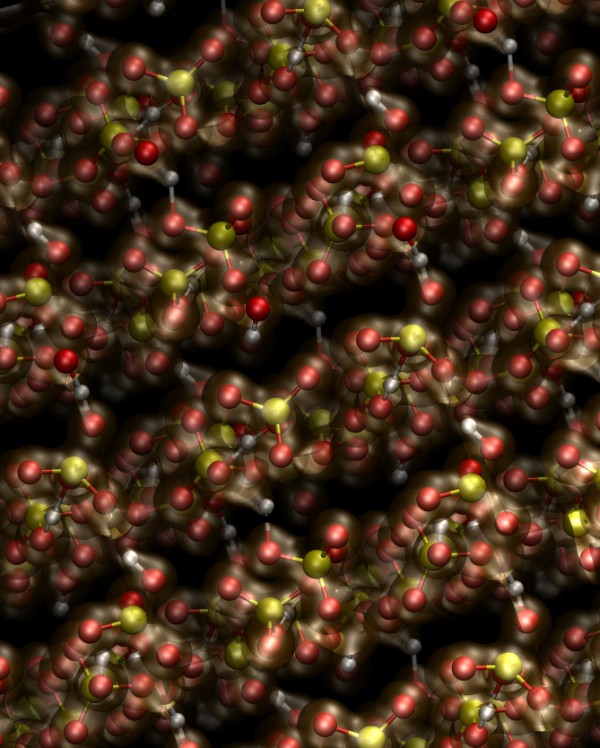

Hydrogen-bonded superprotonic conductor CsHSO₄

“It’s not that we’re no longer doing experiments,” Marzari said. “I would never trust a simulation to capture the entire complexity of the real world. It allows us though to rapidly test hypotheses, dig into worldwide data or search among all the compounds studied up to now to find that rare gem that has the optimal performance for the needs at hand.”

One such gem, uncovered with these modelling techniques, is a new catalyst for the synthesis of ammonia. Ammonia is absolutely critical to modern life: we need it to produce fertilizers, and it’s only through fertilizers that we can feed 7 billion people. The catalyst used in the current industrial process was found by trial and error, and a lot of it: chemist Alwin Mittasch tried 22,000 compounds in the lab before settling on the iron-based catalyst still used today. In 2009, Jens Nørskov at Stanford used computational high-throughput approaches, aided by physical intuition, to explore an even larger space of possibilities and, ultimately, to discover a better catalyst.
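The general shape of a computational high-throughput screen can be sketched as "compute a descriptor for every candidate, score it, keep the best". The sketch below is hypothetical and is not Nørskov's actual workflow: it assumes a single binding-energy descriptor per candidate, a simplified Sabatier-style "volcano" score that peaks at an assumed optimal binding strength, and made-up candidate names and values that stand in for results a real study would obtain from DFT calculations.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    binding_energy: float  # hypothetical descriptor in eV; placeholder values, NOT real data


def volcano_activity(binding_energy: float, optimum: float = 0.0) -> float:
    # Simplified Sabatier principle: activity is highest at an intermediate binding
    # strength and falls off linearly on either side (too weak: reactants don't stick;
    # too strong: products don't leave). The optimum of 0.0 eV is an assumption.
    return -abs(binding_energy - optimum)


def screen(candidates: list[Candidate], optimum: float = 0.0, top: int = 3) -> list[Candidate]:
    # Rank every candidate by its predicted activity and keep the best few.
    ranked = sorted(candidates,
                    key=lambda c: volcano_activity(c.binding_energy, optimum),
                    reverse=True)
    return ranked[:top]


# Placeholder library; in a real study each value would come from a DFT calculation.
library = [
    Candidate("candidate_A", -0.9),
    Candidate("candidate_B", 0.4),
    Candidate("candidate_C", -0.1),
    Candidate("candidate_D", 1.2),
    Candidate("candidate_E", 0.05),
]

for c in screen(library):
    print(c.name, c.binding_energy)
```

The cheap scoring step is what makes the search "high-throughput": once the descriptor is computed, ranking thousands of candidates costs almost nothing, and only the most promising ones need to go to the lab.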

“This tells us that even for the most important chemical reaction in the world, there are still huge possibilities to improve on our needs by searching for novel materials solutions,” Marzari said.